AMR PAR


General Information

- Application's name: Parallel algorithm and program for the solving of continuum mechanics equations using Adaptive Mesh Refinement

- Application's acronym: AMR_PAR

- Virtual Research Community: VRC "Computational Physics"

- Scientific contact: Boris RYBAKIN, rybakin@math.md

- Technical contact: Nicolai ILIUHA, nick@renam.md

- Developers: Dr. habil. Boris RYBAKIN, Institute of Mathematics and Computer Science, Laboratory of Mathematical Modelling, Republic of Moldova

- Web site: http://www.math.md/en/

Short Description

Many complex problems of continuum mechanics are solved numerically on structured or unstructured grids. Improving the accuracy of the calculations requires a sufficiently fine grid (one with a small cell size), which substantially increases the computation time. For this reason, Adaptive Mesh Refinement (AMR) has been widely applied to complex problems in recent years. With AMR, the grid is refined only in the regions of interest, for example where shock waves are generated or where complex geometry or similar features exist, so the computing time is greatly reduced. In addition, the computation on the resulting sequence of nested, progressively finer grids can be parallelized. For arrays with dimensions up to 128x128x128, AMR_PAR can still be executed on the Grid. For larger arrays, which are the ones of practical interest, message-passing delays greatly increase the run time, and an HPC solution becomes mandatory.
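As a rough illustration of the refinement idea described above, the following Python sketch flags the cells of a 1-D grid where the value jumps sharply between neighbours and subdivides only those cells. The function names `flag_cells` and `refine`, the jump-based criterion, the threshold and the sample density profile are all assumptions made for this example; they are not taken from AMR_PAR, which is written in Fortran and works on 3-D grids.

```python
def flag_cells(values, threshold):
    """Return indices of cells whose jump to a neighbour exceeds threshold."""
    flagged = set()
    for i in range(len(values) - 1):
        if abs(values[i + 1] - values[i]) > threshold:
            # Mark both cells adjacent to the steep jump.
            flagged.add(i)
            flagged.add(i + 1)
    return sorted(flagged)


def refine(values, flagged):
    """Split each flagged cell into two children holding the parent's value."""
    refined = []
    for i, v in enumerate(values):
        refined.extend([v, v] if i in flagged else [v])
    return refined


# Hypothetical density profile with a shock-like jump between cells 3 and 4.
density = [1.0, 1.0, 1.0, 1.0, 4.0, 4.0, 4.0, 4.0]
flagged = flag_cells(density, threshold=0.5)
fine = refine(density, set(flagged))
print(flagged)    # cells selected for refinement
print(len(fine))  # cell count after one refinement pass
```

Only the two cells next to the jump are subdivided, so the grid grows from 8 to 10 cells instead of doubling to 16, which is the saving that AMR aims for.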

Problems Solved

We consider a continuum mechanics problem, namely modelling the explosion of a type II supernova. For this example we construct an algorithm based on the AMR method and build a parallel program. The results of the blast calculation are then visualized.

Scientific and Social Impact

The method can be applied to many other current problems of continuum mechanics, such as aircraft aerodynamics and the air flow around cars, and to a large number of other mathematical modelling problems, such as blood flow through vessels or the behaviour of heart valves. Its practical value is that complex problems can be calculated in a reasonable time. In all these cases, we first define a criterion for identifying the regions in which the grid must be refined; the program then builds a sequence of grids and computes the solution on them. The social impact depends on the problem being solved: AMR_PAR is of interest to heavy industry (e.g. car body design and development, aircraft aerodynamics) and to the healthcare industry.

Collaborations

- SIISI RAS, Moscow, Russia

Beneficiaries

- Main beneficiaries are research groups in Computational Mathematics and Computational Astrophysics.

Number of users

5

Development Plan

- Concept: The concept was completed before the project started.

- Start of alpha stage: M01. Construction of the algorithm and creation of the program.

- Start of beta stage: M06. Parallelization and debugging of the application.

- Start of testing stage: M8. Testing on multiprocessor platforms.

- Start of deployment stage: M10. Performing calculations and debugging the results visualization component. Preparing for deployment on remote sites.

- Start of production stage: 09.2012

Resource Requirements

- Number of cores required for a single run: from 4 up to 32

- Minimum RAM/core required: 1 GB

- Storage space during a single run: 1-110 GB

- Long-term data storage: 500 GB

- Total core hours required: Unknown

Technical Features and HP-SEE Implementation

- Primary programming language: Intel Fortran 11.0

- Parallel programming paradigm: OpenMP

- Main parallel code: OpenMP

- Pre/post processing code: Developed in-house

- Application tools and libraries: OpenMP, Intel MKL 10.1

Usage Example

Infrastructure Usage

- Home system: SGI UltraViolet 1000 supercomputer at NIIFI, located in Pecs, Hungary

- Applied for access on: 10.2011

- Access granted on: 10.2011

- Achieved scalability: 8 cores

- Accessed production systems:

  - SGI UltraViolet 1000 supercomputer at NIIFI, located in Pecs, Hungary

    - Applied for access on: 10.2011

    - Access granted on: 10.2011

    - Achieved scalability: 8 cores

  - HPCG cluster located at IICT of Bulgarian Academy of Sciences

    - Applied for access on: 10.2010

    - Access granted on: 10.2010

    - Achieved scalability: 8 cores

- Porting activities: Interoperability testing of the application code. The compiled application was tested on the front-end computers of resource centers 1 and 2.

- Scalability studies: Scalability problems in the application were found during tests.

Running on Several HP-SEE Centres

- Benchmarking activities and results:

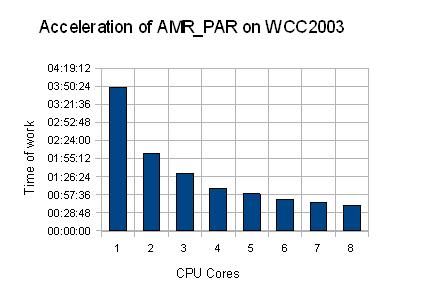

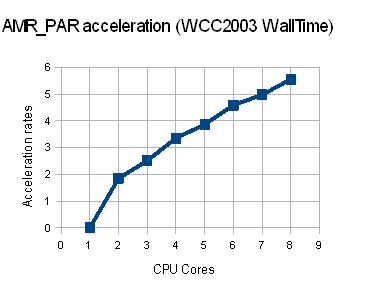

AMR_PAR application in OpenMP mode was tested on MS Windows Compute Cluster 2003.

1. Calculations on a cluster node without virtualization (4 cores, 4 GB RAM); from 1 to 8 cores were used. CPUs: 2 x quad-core Intel Xeon E5335, 2.0 GHz

| Number of cores | Job start | Job end | Job wall time | Acceleration (wall time) | Calculation time, s (AMR_PAR internal counter) | Acceleration (counter) | Deviation (counter vs. wall time) |
|---|---|---|---|---|---|---|---|
| 1 | 11:04:18 | 14:53:27 | 03:49:09 | 0 | 13600.23 | 0 | 0 |
| 2 | 17:23:59 | 19:27:49 | 02:03:50 | 1.8505 | 7281.81 | 1.8677 | 0.0172 |
| 3 | 15:52:42 | 17:23:59 | 01:31:17 | 2.5103 | 5330.05 | 2.5516 | 0.0413 |
| 4 | 19:27:49 | 20:36:07 | 01:08:18 | 3.3551 | 3947.80 | 3.4450 | 0.0900 |
| 5 | 14:53:27 | 15:52:42 | 00:59:15 | 3.3551 | 3947.80 | 3.4450 | 0.0900 |
| 6 | 10:14:22 | 11:04:18 | 00:49:56 | 4.5891 | 2848.71 | 4.7742 | 0.1851 |
| 7 | 09:28:19 | 10:14:22 | 00:46:03 | 4.9761 | 2616.35 | 5.1982 | 0.2221 |
| 8 | 08:00:06 | 08:41:19 | 00:41:13 | 5.5596 | 2328.53 | 5.8407 | 0.2811 |
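The wall-time acceleration in the table above is the single-core wall time divided by the wall time on N cores. The Python sketch below recomputes it from the Job Wall Time column. The helper `to_seconds` and the parallel efficiency (acceleration divided by core count) are additions for illustration and are not part of the original benchmark. With this convention the single-core baseline has acceleration 1, where the table lists 0. The 5-core run recomputes to about 3.87, whereas the table repeats the 4-core figures in that row.

```python
def to_seconds(hms):
    """Convert an 'HH:MM:SS' string to seconds."""
    h, m, s = (int(part) for part in hms.split(":"))
    return 3600 * h + 60 * m + s


# Job Wall Time column of the table above (cores -> wall time).
wall_times = {
    1: "03:49:09", 2: "02:03:50", 3: "01:31:17", 4: "01:08:18",
    5: "00:59:15", 6: "00:49:56", 7: "00:46:03", 8: "00:41:13",
}

baseline = to_seconds(wall_times[1])
# Acceleration relative to the single-core run.
speedup = {p: baseline / to_seconds(t) for p, t in wall_times.items()}
# Parallel efficiency: fraction of ideal linear acceleration achieved.
efficiency = {p: speedup[p] / p for p in wall_times}

for p in sorted(wall_times):
    print(f"{p} cores: speedup {speedup[p]:.4f}, efficiency {efficiency[p]:.2f}")
```

On 8 cores the efficiency comes out at about 0.69, so each added core contributes less than the previous one.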

Diagrams of the acceleration of AMR_PAR running in OpenMP mode on MS Windows Compute Cluster 2003:

2. Calculations on a cluster node with virtualization, on a virtual machine (4 cores, 4 GB RAM); from 1 to 4 cores were used. CPUs: 2 x quad-core Intel Xeon E5335, 2.0 GHz

| Number of VCPUs | Job start | Job end | Job wall time | Acceleration (wall time) | Calculation time, s (AMR_PAR internal counter) | Acceleration (counter) | Deviation (counter vs. wall time) |
|---|---|---|---|---|---|---|---|
| 1 | 16:07:30 | 19:59:04 | 03:51:34 | 0 | 13734.72 | 0 | 0 |
| 2 | 19:59:05 | 22:04:53 | 02:05:48 | 1.8408 | 7385.04 | 1.8598 | 0.0191 |
| 3 | 22:04:54 | 23:37:34 | 01:32:40 | 2.4989 | 5398.68 | 2.5441 | 0.0452 |
| 4 | 23:37:35 | 00:46:37 | 01:09:02 | 3.3544 | 3980.13 | 3.4508 | 0.0964 |

3. Comparison of the results of the application AMR_PAR running without and with the use of virtualization.

| Total time | Configuration |
|---|---|
| 08:39:04 | Total time of 4 calculations with virtualization. The application ran on WCC2003 in a virtual machine under Citrix XenServer 5.6 (4 VCPUs, 4 GB RAM). |
| 08:32:34 | Total time of 4 calculations without virtualization. WCC2003 was installed on bare metal (4 CPUs, 4 GB RAM). |
| 00:06:30 | Difference: a 1.27% slowdown introduced by the virtualization platform (for this application). |
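The slowdown figure can be checked directly from the two totals: it is the time difference divided by the bare-metal total. The short Python sketch below does this arithmetic; the helper `to_seconds` and the variable names are illustrative only.

```python
def to_seconds(hms):
    """Convert an 'HH:MM:SS' string to seconds."""
    h, m, s = (int(part) for part in hms.split(":"))
    return 3600 * h + 60 * m + s


with_vm = to_seconds("08:39:04")     # total of 4 runs on the virtual machine
bare_metal = to_seconds("08:32:34")  # total of 4 runs on bare metal

difference = with_vm - bare_metal    # extra seconds spent under virtualization
slowdown_percent = 100.0 * difference / bare_metal

print(difference, round(slowdown_percent, 2))
```

The difference is 390 seconds (00:06:30), which is 1.27% of the bare-metal total.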

Achieved Results

The 64-bit AMR_PAR application was developed in MS Visual Studio 2010. Before porting to HPC clusters or supercomputer infrastructure, the application was ported to and tested on a virtual machine (4 cores, 4 GB RAM) running Scientific Linux 5.5 with Intel(R) Parallel Studio XE 2011.

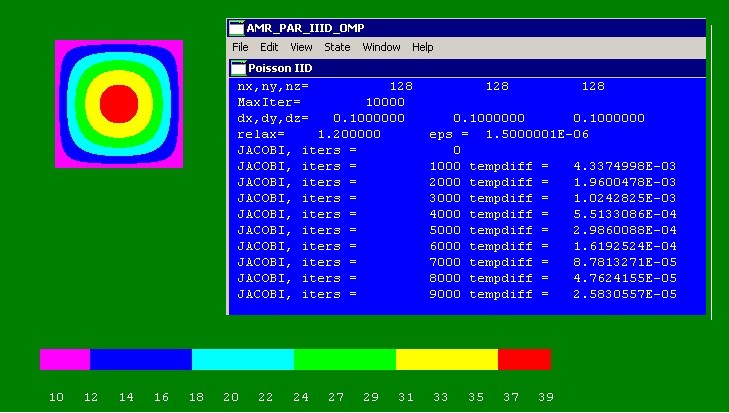

AMR_PAR application in OpenMP mode was tested on small AMR grids (128x128x128 cells, 5 layers)

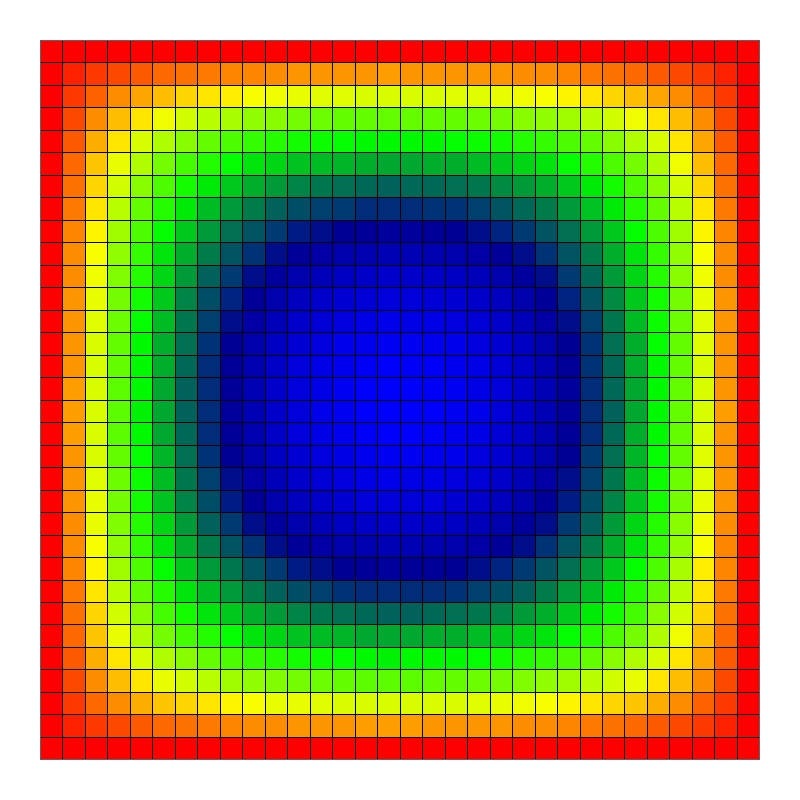

2-D visualization of AMR_PAR results: the solution of the Poisson equation with zero boundary conditions on a 128x128x128 grid with 5 grid levels

2-D visualization of AMR_PAR results: the solution of the Poisson equation with zero boundary conditions on a 32x32x32 grid with 5 grid levels

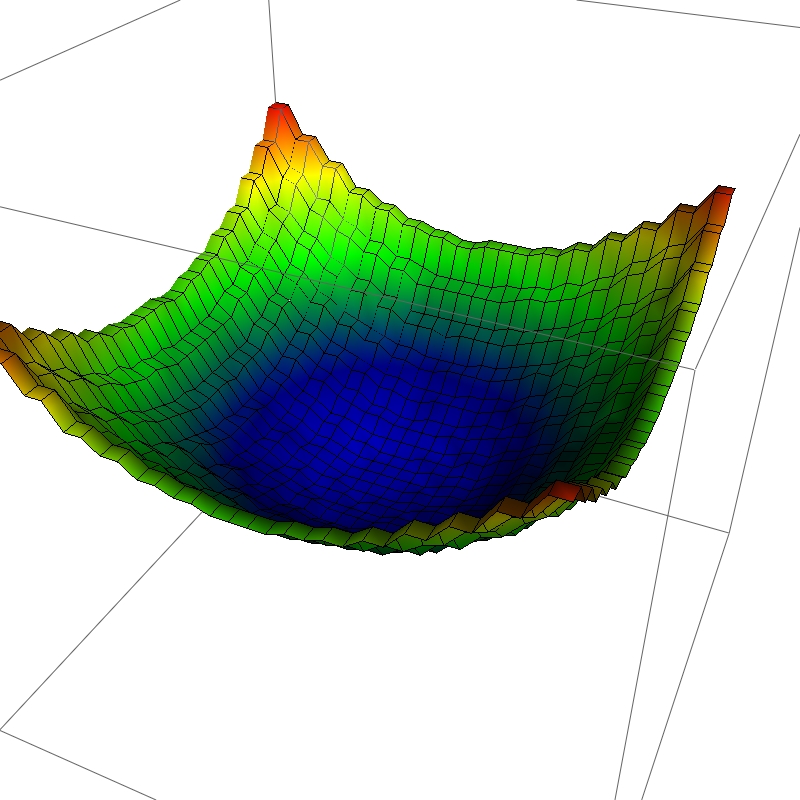

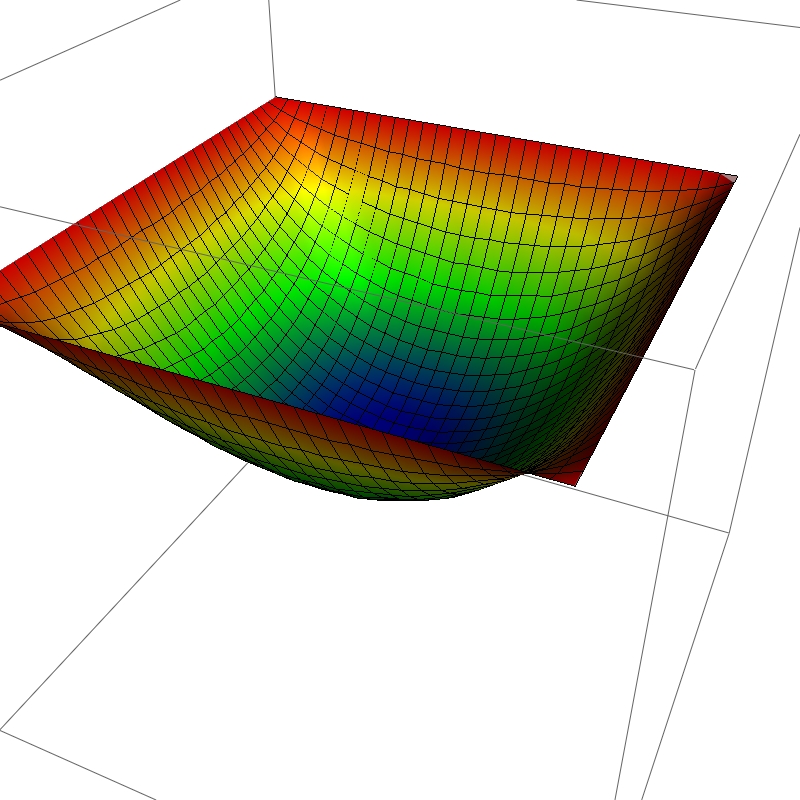

3-D visualization of AMR_PAR results: the solution of the Poisson equation with zero boundary conditions on a 32x32x32 grid with 5 grid levels

Publications

- RYBAKIN, B.; STRELNIKOVA, E.; SECRIERU, G.; GUTULEAC, E. Computer simulation of dynamic loading of fluid-filled shell structures. In: Abstracts of the Conference "Mathematics & Information Technologies: Research and Education (MITRE-2011)", Chişinău, 22-25 August 2011, p. 102. ISBN 978-9975-71-144-9

- RYBAKIN, B.P. Решение III-d задач газовой динамики на графических ускорителях (Modeling of III-d gas dynamics problems on graphics accelerators). In: Proceedings of the International Supercomputing Conference "Scientific Services in Internet: Exaflops Future". Novorossiysk, Russia, 2011, pp. 321-325 (in Russian).

- RYBAKIN, B.P.; STAMOV, L.I. Использование многопроцессорных вычислительных систем и графических ускорителей для моделирования задач газодинамики (Modeling of III-D problems of gas dynamics on multiprocessing computers and GPU). In: Proceedings of the International Supercomputing Conference "Scientific Services in Internet: Exaflops Future". Novorossiysk, Russia, 2011, pp. 84-89 (in Russian).

- RYBAKIN, B.P.; EGOROVA, E.V. Решение задач газовой динамики на графических ускорителях (Modeling of gas dynamics problems on graphics accelerators). In: Proceedings of the International Supercomputing Conference "Scientific Services in Internet: Exaflops Future". Novorossiysk, Russia, 2011, pp. 326-331 (in Russian).

- RYBAKIN, B.; SECRIERU, G.; GUTULEAC, E. Research of the intense-deformed condition of elastic-plastic shells under the influence of intensive dynamic loadings. In: The 19th Edition of the Annual Conference on Applied and Industrial Mathematics (CAIM), Iaşi, September 22-25, 2011, p. 50, ISSN 1841-5512.

- B.P. RYBAKIN. Modeling of III-D problems of gas dynamics on multiprocessing computers and GPU. In: Computers & Fluids, Elsevier, January 2012, http://dx.doi.org/10.1016/j.compfluid.2012.01.016.

Foreseen Activities

- Based on the calculated RAM requirements for grid dimensions up to 2048x2048x2048 cells, the SGI UltraViolet 1000 supercomputer at the National Information Infrastructure Development Institute, located in Pecs, Hungary (SMP, 1152 cores and 6057 GB RAM), was proposed as the home cluster for porting the application. For small grids (up to 384x384x384 cells), the resources of HPCG can be used.

1. Benchmark results and forecast resource demands

| Dimension | Cores | RAM, GB | CPU time, min | Wall time, min |
|---|---|---|---|---|
| 128x128x128 | 4 | 0.789 | 28 | 3.5 |
| 256x256x256 | 8 | 6.062 | 527 | 66 |
| 512x512x512 | 12-16 | ~ 55.6 | ~ 7 800 | ~ 1 020 |
| 1024x1024x1024 | 16-32 | ~ 415 | ~ 120 000 | ~ 14 880 |
| 2048x2048x2048 | 32-64 | ~ 3250 | ~ 1 728 000 | ~ 221 760 |
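A quick plausibility check of the RAM column is to assume that memory grows with the number of cells, that is, by a factor of 8 each time the grid dimension doubles. The Python sketch below extrapolates from the 256x256x256 value under that assumption. The function `extrapolate_ram` and the pure cubic scaling are illustrative; they are not the method used to produce the forecasts in the table.

```python
def extrapolate_ram(base_dim, base_ram_gb, target_dim):
    """Scale RAM with the cell count, i.e. with (target_dim / base_dim) ** 3."""
    return base_ram_gb * (target_dim / base_dim) ** 3


# Starting point: the 256x256x256 row of the table above.
BASE_DIM, BASE_RAM_GB = 256, 6.062

for dim in (512, 1024, 2048):
    estimate = extrapolate_ram(BASE_DIM, BASE_RAM_GB, dim)
    print(f"{dim}^3 cells: about {estimate:.1f} GB")
```

Pure cubic scaling gives roughly 48.5, 388 and 3104 GB, somewhat below the tabulated forecasts of about 55.6, 415 and 3250 GB, so the table appears to allow for some additional overhead. Either way, the largest grid fits within the 6057 GB of the SGI UltraViolet 1000.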

- To optimize AMR_PAR further, we plan to collect statistics on how the acceleration depends on the number of cores, up to 32 or more. Investigations are needed to find the optimal number of cores for the fastest calculations on large grids. Based on this research, we plan to modify the application to use OpenMP more effectively.

- The next step is to run the application on HP-SEE regional resources for large grids, up to 2048x2048x2048 cells and 5-7 layers. For this purpose the application was adapted to run on the SGI UltraViolet 1000 supercomputer at the National Information Infrastructure Development Institute, located in Pecs, Hungary (SMP, 1152 cores and 6057 GB RAM). Once results from the modified application are available, it will be possible to carry out new benchmarking (given the long forecast calculation times) and to propose new recommendations for optimizing the application. The updated application will also allow results to be described and visualized as 2-D images and 3-D models.
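One generic way to approach the question of the optimal number of cores is to estimate the serial fraction of the run from a measured acceleration (the Karp-Flatt metric) and then project the acceleration for more cores with Amdahl's law. The Python sketch below does this for the 8-core wall-time acceleration measured without virtualization. It is a textbook technique, not the authors' planned method, and the function names are assumptions. Amdahl's law ignores memory bandwidth and load imbalance between AMR levels, so the projection is only a rough upper bound.

```python
def serial_fraction(speedup, cores):
    """Karp-Flatt metric: experimentally determined serial fraction."""
    return (1.0 / speedup - 1.0 / cores) / (1.0 - 1.0 / cores)


def amdahl_speedup(fraction, cores):
    """Acceleration predicted by Amdahl's law for a given serial fraction."""
    return 1.0 / (fraction + (1.0 - fraction) / cores)


# Wall-time acceleration measured on 8 cores without virtualization.
measured_speedup, measured_cores = 5.5596, 8
f = serial_fraction(measured_speedup, measured_cores)
print(f"estimated serial fraction: {f:.3f}")

for cores in (8, 16, 32):
    print(f"{cores} cores: projected speedup {amdahl_speedup(f, cores):.2f}")
```

With a serial fraction of about 0.06, the projected acceleration grows slowly beyond 16 cores and cannot exceed 1/f, which is one reason to measure before committing large core counts to a run.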